CogniSafe3D

With the CogniSafe3D project, we are bridging the gap between deterministic industrial safety and the flexibility of AI-driven robotics.

Modern robotic systems increasingly rely on deep learning and adaptive behaviors. However, industrial safety standards require deterministic, certifiable behavior. This creates a fundamental conflict: non-deterministic AI systems must operate within deterministic safety frameworks.

Traditional safety systems often rely on 2D sensing and therefore enforce conservative speed limits or trigger frequent stops, which reduces productivity and limits true collaboration.

CogniSafe3D addresses this challenge by introducing a cognitive 3D safety architecture that combines deterministic safety guarantees with AI-enhanced environmental understanding. The goal is to enable adaptive, certifiable safety without sacrificing efficiency.

CogniSafe3D uses external high-resolution 3D LiDAR to monitor shared workspaces and implements a layered safety concept consisting of a Deterministic Foundation and a Cognitive Layer.

Deterministic Foundation

- Processing of 3D point clouds using Signed Distance Fields (SDF)

- Reliable, safety-rated violation detection

- Deterministic spatial safety boundary enforcement

- Real-time risk monitoring independent of AI components

This foundation ensures compliance with current safety standards and provides certifiable baseline behavior.
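
As a rough illustration of the idea behind SDF-based violation detection, the following minimal sketch evaluates the signed distance of LiDAR points to a protected zone and flags a violation with a fixed, repeatable rule. It is not the project's implementation: the axis-aligned box zone, the function names `box_sdf` and `zone_violated`, and the `margin` and `min_points` parameters are assumptions made for this example.

```python
import math

def box_sdf(point, center, half_extents):
    """Signed distance from a 3D point to an axis-aligned box.

    Negative inside the box, zero on its surface, positive outside.
    The box-shaped zone is an assumption made for this sketch.
    """
    q = [abs(p - c) - h for p, c, h in zip(point, center, half_extents)]
    outside = math.sqrt(sum(max(v, 0.0) ** 2 for v in q))
    inside = min(max(q), 0.0)
    return outside + inside

def zone_violated(points, center, half_extents, margin=0.0, min_points=1):
    """Return True if at least `min_points` points lie within `margin` of the zone.

    The rule is a pure function of its inputs, so the same point cloud
    always yields the same decision. `margin` and `min_points` are
    hypothetical tuning parameters, not values from the project.
    """
    hits = sum(1 for p in points if box_sdf(p, center, half_extents) <= margin)
    return hits >= min_points

if __name__ == "__main__":
    cloud = [(2.0, 0.0, 0.0), (3.0, 1.0, 0.5)]
    zone_center, zone_half = (0.0, 0.0, 0.0), (1.0, 1.0, 1.0)
    print(zone_violated(cloud, zone_center, zone_half, margin=0.5))
    print(zone_violated(cloud, zone_center, zone_half, margin=1.0))
```

Because the check contains no learned component, it can run independently of the cognitive layer, which matches the separation described above.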

Cognitive Layer

- AI-based Human Pose Estimation (HPE)

- Predictive algorithms for human intent and motion forecasting

- Context-aware risk evaluation

- Dynamic adjustment of robot behavior

The cognitive layer enhances the deterministic core by anticipating hazards before they occur.
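
To make the notion of anticipating hazards more concrete, here is a deliberately simple sketch, assuming a constant-velocity motion model in place of the learned predictors the project targets. It extrapolates one tracked body keypoint and derives a speed scaling factor from the smallest predicted distance to the robot. The function names and the `stop_dist` and `slow_dist` thresholds are hypothetical.

```python
import math

def forecast_constant_velocity(p_prev, p_curr, dt, horizon_s, steps):
    """Extrapolate a keypoint linearly from its last two observations.

    Returns `steps` predicted positions spread evenly over `horizon_s` seconds.
    A constant-velocity model is a stand-in for a learned forecaster.
    """
    v = [(c - p) / dt for p, c in zip(p_prev, p_curr)]
    return [
        [c + vi * horizon_s * k / steps for c, vi in zip(p_curr, v)]
        for k in range(1, steps + 1)
    ]

def speed_scale(predicted, robot_point, stop_dist, slow_dist):
    """Map the minimum predicted distance to a speed factor in [0, 1].

    0 means stop, 1 means full speed. Thresholds are illustrative only.
    """
    d_min = min(math.dist(p, robot_point) for p in predicted)
    ratio = (d_min - stop_dist) / (slow_dist - stop_dist)
    return max(0.0, min(1.0, ratio))

if __name__ == "__main__":
    # Hand keypoint moving toward the robot at roughly 1 m/s.
    path = forecast_constant_velocity((2.0, 0.0, 0.0), (1.9, 0.0, 0.0), 0.1, 1.0, 10)
    print(speed_scale(path, (0.0, 0.0, 0.0), stop_dist=0.5, slow_dist=1.5))
```

In a layered design like the one described here, such a factor would only ever reduce speed early. The deterministic foundation would still enforce the hard boundary on its own.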

CogniSafe3D Goals:

- Functional Safety: design and validate a certifiable safety architecture compliant with ISO 10218:2025 and ISO/TS 15066

- Cybersecurity: design and integrate cybersecurity mechanisms aligned with ISA/IEC 62443 to ensure secure, resilient operation of connected robotic systems in industrial environments

- Integration: enable scalable integration into ROS 2–based robotic systems

Industrial Impact

- Resolves the conflict between AI-driven flexibility and certifiable safety requirements

- Reduces unnecessary emergency stops through predictive risk assessment

- Increases productivity in shared workspaces

- Shifts from reactive shutdowns to proactive hazard mitigation

Funding Acknowledgement: The CogniSafe3D project (E! 6085) receives funding under the Eureka-Eurostars program from the German Federal Ministry of Research, Technology and Space (BMFTR grant number 01QE2426A) and the Italian Ministero dell’Università e della Ricerca (MUR).

GRIZZLY

Event-based vision for precision recycling and sustainability.

GRIZZLY develops a next-generation sensor-based sorting system by combining event-based 3D object tracking with hyperspectral material classification. The project addresses a fundamental limitation of current sorting systems: objects move between detection and separation, reducing precision and efficiency.

By fusing a line-scan hyperspectral camera for classification with a novel event-based vision system for real-time tracking, GRIZZLY enables precise trajectory prediction and adaptive actuator control. The result is improved sorting accuracy, higher throughput, and reduced energy consumption in recycling and material processing.

Funding Acknowledgement: The GRIZZLY project (03DPS1146B) is carried out by Fraunhofer IOSB and Proximity Robotics & Automation GmbH and receives funding under the DATIpilot program from the German Federal Ministry of Research, Technology and Space (BMFTR).

Technological Innovation

- Event-based real-time 3D tracking of objects in flight

- Fusion of hyperspectral classification and motion tracking

- Delay-free backtracking for precise actuator timing

- Low-latency data processing with reduced computational load

- Real-time validation of sorting accuracy
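
The link between tracking and actuator timing can be sketched as follows, assuming drag-free ballistic flight and a separation unit located on a vertical plane at `x_nozzle`. Both assumptions, along with the function names and the `actuator_latency_s` parameter, are illustrative and not taken from the project.

```python
G = 9.81  # gravitational acceleration in m/s^2

def predict_crossing(pos, vel, x_nozzle):
    """Predict when and where a tracked object crosses the plane x = x_nozzle.

    pos and vel are (x, y, z) tuples in metres and metres per second, with z
    pointing up. Returns (time_s, y, z), or None if the object is not moving
    toward the plane. Air drag is ignored, which is a simplification for
    lightweight materials.
    """
    x, y, z = pos
    vx, vy, vz = vel
    if vx <= 0.0 or x_nozzle < x:
        return None
    t = (x_nozzle - x) / vx
    return t, y + vy * t, z + vz * t - 0.5 * G * t * t

def trigger_delay(t_cross, actuator_latency_s):
    """Time to wait before triggering so the actuator acts at crossing time.

    Returns None when the object would arrive before the actuator can react.
    """
    delay = t_cross - actuator_latency_s
    return delay if delay >= 0.0 else None

if __name__ == "__main__":
    crossing = predict_crossing((0.0, 0.1, 0.5), (2.0, 0.0, 0.0), x_nozzle=0.4)
    if crossing is not None:
        t, y, z = crossing
        print(t, y, z, trigger_delay(t, actuator_latency_s=0.005))
```

Re-running the prediction on every tracker update shrinks the remaining extrapolation interval, which is the practical benefit of tracking objects between detection and separation.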

Industrial Relevance

- Increased sorting accuracy and purity

- Higher throughput under varying transport speeds

- Reduced energy consumption of separation units

- Improved handling of lightweight and complex materials

- Scalable integration into existing sorting systems

Research Focus

- Calibration and optimization of event-based cameras

- Real-time tracking algorithm development

- Sensor fusion under industrial conditions (dust, vibration, lighting changes)

- Latency analysis across the full activation stack

- Benchmarking against state-of-the-art sorting systems to demonstrate gains in accuracy and throughput

Energy-Aware Humanoids

Wireless In-Motion Charging for Energy-Aware Humanoid Robots

Humanoid robots are rapidly emerging as a transformative technology across a wide range of application domains, including industrial manufacturing, logistics, healthcare, education, and service robotics. As these systems become more autonomous and capable of complex whole-body motion, their reliance on advanced perception modules, embedded AI computation, and multi-degree-of-freedom actuation continues to grow. This evolution results in significantly increased and highly dynamic energy consumption profiles. Ensuring continuous operation, minimizing downtime, and maintaining energy efficiency therefore represent fundamental challenges for the practical deployment of humanoid robots in real-world environments such as smart factories, warehouses, and hospitals.

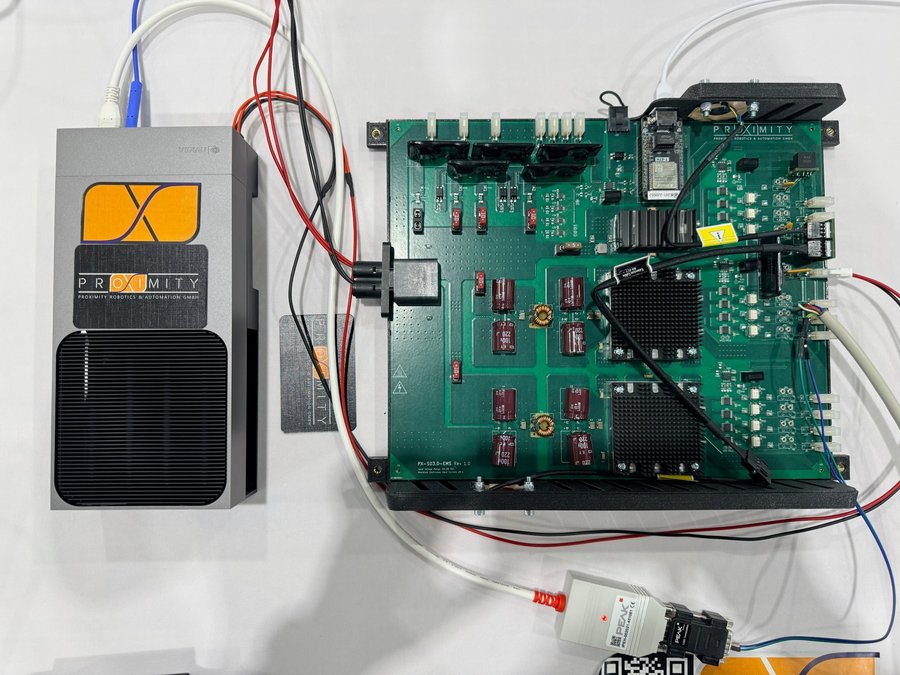

- pxEMS for Energy Profiling of Humanoids

- Sustainable Robotics

- Parameter Optimization using Digital Twins

- Robotic Task Optimization for WPT

- RL-based Locomotion Generation with Energy Constraints

We focus on:

Uninterrupted Operation for Humanoids

- Continuous Energy for Robots: enables sustained humanoid operation without reliance on manual battery swaps or fixed docking stations

- Continuous Energy Management: shifts from discrete charging cycles to ongoing, learning-driven energy optimization to minimize downtime

- Joint Optimization of Mobility & Energy Intake: balances locomotion stability, task performance, and energy harvesting in a unified control framework

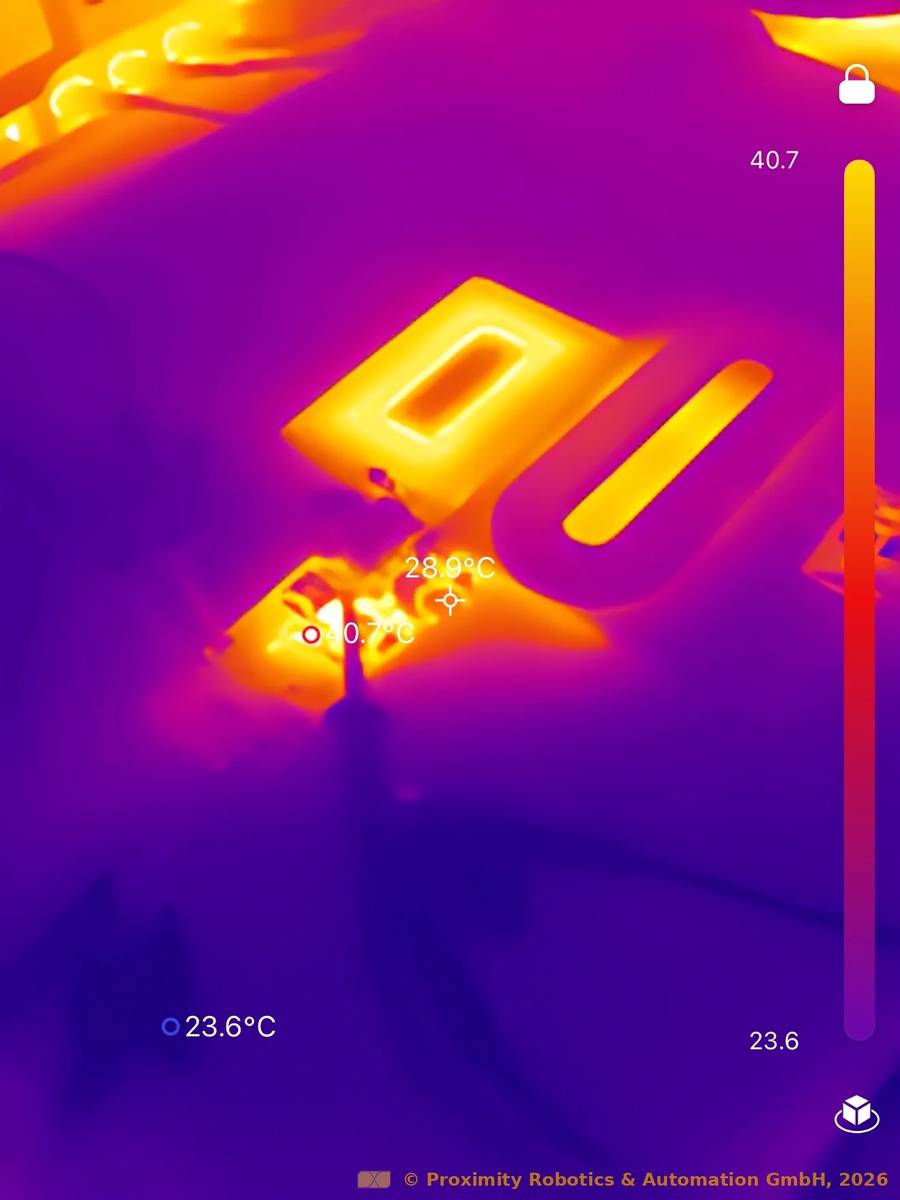

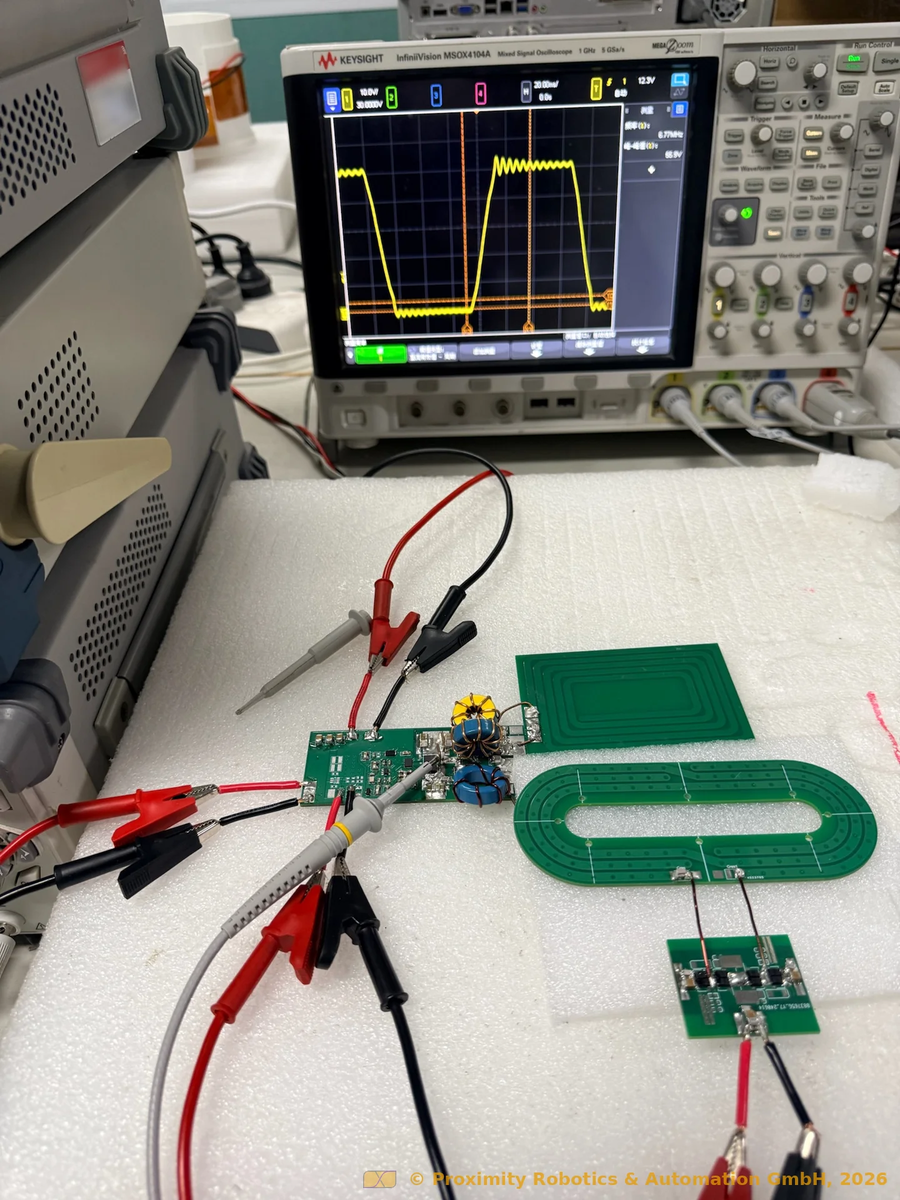

Wireless Power Transfer (WPT)

- MHz-Range Wireless Power Transfer (6.78 MHz): high-frequency WPT enables compact, lightweight, and high-power-density charging systems compared to conventional kHz-range approaches.

- Reduced Size & Weight of Charging Hardware: eliminates bulky ferrite materials, resulting in mechanically robust and humanoid-compatible charging modules.

- In-Motion Charging: supports wireless energy transfer while walking, standing, or performing tasks—without precise docking requirements.
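
For a feel of the component values involved at 6.78 MHz, the sketch below applies the standard LC resonance relation f0 = 1 / (2π√(LC)). It assumes a resonant inductive link, which the text above does not state explicitly, and the 2 µH coil inductance is an arbitrary example value rather than a project parameter.

```python
import math

def resonant_capacitance(freq_hz, inductance_h):
    """Capacitance that resonates with `inductance_h` at `freq_hz` (ideal LC tank)."""
    return 1.0 / ((2.0 * math.pi * freq_hz) ** 2 * inductance_h)

def resonant_frequency(inductance_h, capacitance_f):
    """Resonant frequency of an ideal LC tank."""
    return 1.0 / (2.0 * math.pi * math.sqrt(inductance_h * capacitance_f))

if __name__ == "__main__":
    coil_l = 2e-6  # hypothetical coil inductance in henries
    cap = resonant_capacitance(6.78e6, coil_l)
    print(f"tuning capacitance: {cap * 1e12:.1f} pF")
    print(f"check frequency: {resonant_frequency(coil_l, cap) / 1e6:.3f} MHz")
```

Since the required capacitance scales with 1/f², a MHz-range link needs far smaller reactive components than a kHz-range link with the same coil, which is consistent with the compactness argument above.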

Energy-Aware Control Policies

- Reinforcement Learning–Based Locomotion Adaptation: uses reinforcement learning to adapt gait, posture, and foot placement to improve charging efficiency during motion.

- Charging-Module-Aware Locomotion Optimization: integrates the spatial distribution of WPT transmitters directly into the locomotion policy, enabling robots to exploit high-efficiency regions.

- Energy-Aware Charging Strategies: optimizes charging from a control perspective rather than treating it as a separate subsystem.
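
One way to picture how charging efficiency can enter a locomotion policy is through the reward function. The following sketch is hypothetical: the Gaussian coupling model, the reward terms, and all weights are assumptions chosen for illustration and do not describe the project's actual training setup.

```python
import math

def coupling_efficiency(foot_xy, transmitters_xy, sigma_m=0.15):
    """Hypothetical efficiency model: Gaussian falloff with the distance
    between the foot and the nearest floor transmitter (1.0 when aligned)."""
    d_min = min(math.dist(foot_xy, tx) for tx in transmitters_xy)
    return math.exp(-0.5 * (d_min / sigma_m) ** 2)

def locomotion_reward(vel_error, tilt_rad, foot_xy, transmitters_xy,
                      w_track=1.0, w_stab=0.5, w_energy=0.3):
    """Combine task tracking, stability, and energy intake in one scalar reward.

    The weights are illustrative. In practice they would be tuned so that
    charging never dominates stability.
    """
    r_track = math.exp(-(vel_error ** 2))   # reward following the commanded velocity
    r_stab = -(tilt_rad ** 2)               # penalize torso tilt
    r_energy = coupling_efficiency(foot_xy, transmitters_xy)
    return w_track * r_track + w_stab * r_stab + w_energy * r_energy

if __name__ == "__main__":
    pads = [(1.0, 0.0), (2.0, 0.0)]
    print(locomotion_reward(0.0, 0.0, (1.0, 0.0), pads))  # foot centred on a pad
    print(locomotion_reward(0.0, 0.0, (1.5, 0.0), pads))  # foot between pads
```

Feeding transmitter positions into the reward, rather than handling charging in a separate controller, is what lets a learned policy trade off foot placement against stability and task performance.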

Infrastructure-Integrated Energy Systems

- Environment-Integrated Charging Infrastructure: embeds WPT transmitters into floors and workspaces, allowing opportunistic energy replenishment during normal operation.

- Seamless Energy Integration: aligns infrastructure and robot intelligence to create cooperative energy ecosystems.

- Infrastructure-Level Energy Orchestration: coordinates multiple embedded WPT modules to dynamically allocate charging capacity based on robot position, demand, and task priority.
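
Allocating charging capacity by demand and priority can be sketched with a simple priority-weighted sharing rule. This is an illustrative assumption, not the project's orchestration algorithm: the `allocate_power` function and its request format are hypothetical, and robot position is left out for brevity.

```python
def allocate_power(budget_w, requests):
    """Split a power budget among robots by priority, capped at each demand.

    requests maps robot_id -> (demand_w, priority). Power that a saturated
    robot cannot use is redistributed among the remaining robots.
    """
    alloc = {rid: 0.0 for rid in requests}
    active = {rid for rid, (demand, prio) in requests.items() if demand > 0 and prio > 0}
    remaining = float(budget_w)
    while active and remaining > 1e-9:
        total_prio = sum(requests[r][1] for r in active)
        saturated = set()
        spent = 0.0
        for r in active:
            share = remaining * requests[r][1] / total_prio
            room = requests[r][0] - alloc[r]
            give = min(share, room)
            alloc[r] += give
            spent += give
            if give >= room - 1e-12:
                saturated.add(r)
        remaining -= spent
        if not saturated:
            break  # every active robot took its full share, budget is used up
        active -= saturated
    return alloc

if __name__ == "__main__":
    print(allocate_power(100.0, {"robot_a": (30.0, 1.0), "robot_b": (100.0, 1.0)}))
```

In this example `robot_a` needs less than its equal share, so the unused part is passed on to `robot_b`. A position-aware variant could restrict each request to the transmitters currently within range of the robot.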

Battery swapping extends runtime; wireless power transfer enables continuous operation.

Upgrade your humanoid platform to uninterrupted, energy-aware operation. Contact us to explore the possibilities.

Also suitable for mobile robotic systems.

Robotic Touch

Affective touch plays a crucial role in emotional regulation, stress reduction, and social bonding. In rehabilitation contexts, controlled physical interaction can support motor recovery and sensory stimulation. While AI has significantly advanced verbal and visual interaction, replicating meaningful physical interaction remains a major scientific and engineering challenge.

This project explores how robots can deliver touch that is perceived as pleasant, human-like, and emotionally meaningful—while maintaining precise control and safety.

The research builds on controlled human studies evaluating robotic stroking behavior using a 7-DoF robotic arm and validated experimental protocols.

Robotic Touch investigates whether robots can reproduce human-like affective touch and how spatial and temporal motion characteristics influence human perception.

For further details, see our relevant publications:

- Y. Tang, T. Chand, I. Mamaev, B. Hein, and I. Croy, "Evaluation of the human-like robotic touch: A user study," in Proc. Int. Conf. Rehabil. Robot. (ICORR), 2025, pp. 1353–1360.

- M. Eckstein, I. Mamaev, B. Ditzen, and U. Sailer, "Calming effects of touch in human, animal, and robotic interaction: Scientific state-of-the-art and technical advances," Front. Psychiatry, vol. 11, Art. 555058, 2020, doi: 10.3389/fpsyt.2020.555058.

Recent publications

We actively advance robotics through ongoing research, development, and collaboration. Building on the company’s academic roots and long-standing international research partnerships, we contribute regularly to the scientific community, with publications at established venues such as IROS, ICRA, and CASE.

Our work spans a broad range of domains, including classical control and AI-based methods such as imitation learning and reinforcement learning, bimanual and dual-arm manipulation, collision avoidance, and robotic perception using RGB(-D) cameras, 3D LiDARs, event-based cameras, and capacitive sensors. We apply these methods to mobile platforms, manipulators, and humanoid robots, with a strong focus on safety-related research such as proactive hazard prediction and dynamic risk assessment.

Through participation in research and teaching activities, we keep our technology portfolio up to date and transfer experience from international R&D and innovation projects into practical applications.

List of publications:

2026

- F. Plahl, G. Katranis, K. Alba, F. Wolny, S. Vock, A. Morozov, and I. Mamaev, "OmniABiD: Evaluating Sim2Real transferability in safety and risk monitoring of human-robot collaboration using NVIDIA Omniverse," in Proc. 17th Eur. Robot. Forum (ERF), 2026, to appear.

2025

- Y. Ma, Z. Jin, Q. Liu, I. Mamaev, and A. Morozov, "Deep Learning-based Proactive Hazard Prediction for Human-Robot Collaboration with Sensor Malfunctions," in Proc. IEEE/RSJ Int. Conf. Intell. Robots Syst. (IROS), 2025.

- F. Plahl, G. Katranis, I. Mamaev, and A. Morozov, "LiHRA: A LiDAR-based HRI dataset for automated risk monitoring methods," in Proc. IEEE/RSJ Int. Conf. Intell. Robots Syst. (IROS), 2025.

- Y. Tang, X. Huang, Y. Zhang, T. Chen, I. Mamaev, and B. Hein, "ETA-IK: Execution-time-aware inverse kinematics for dual-arm systems," in Proc. IEEE/RSJ Int. Conf. Intell. Robots Syst. (IROS), 2025.

- Y. Tang, T. Chen, B. Hein, and I. Mamaev, "Improving feasibility and safety of nonlinear MPC with control barrier function via learning-based non-convex reachable sets," in Proc. IEEE 21st Int. Conf. Autom. Sci. Eng. (CASE), 2025.

- M. Ruhe, K. Alba, M. Kipfmueller, and I. Mamaev, "Simulation-to-reality hyperparameter optimization of MPPI controllers via Bayesian optimization in NVIDIA Omniverse Isaac Sim," in Proc. IEEE 21st Int. Conf. Autom. Sci. Eng. (CASE), 2025.

- Y. Tang, T. Chand, I. Mamaev, B. Hein, and I. Croy, "Evaluation of the human-like robotic touch: A user study," in Proc. Int. Conf. Rehabil. Robot. (ICORR), 2025, pp. 1353–1360.

- G. Katranis, F. Plahl, J. Grimstadt, I. Mamaev, S. Vock, and A. Morozov, "Dynamic risk assessment for human-robot collaboration using a heuristics-based approach," in Proc. 35th Eur. Saf. Reliab. Conf. (ESREL), 2025, pp. 1830–1837.

2024

- Y. Ma, J. Liu, I. Mamaev, and A. Morozov, "Multimodal failure prediction for vision-based manipulation tasks with camera faults," in Proc. IEEE/RSJ Int. Conf. Intell. Robots Syst. (IROS), 2024.

- A. Zachariae, F. Plahl, Y. Tang, I. Mamaev, B. Hein, and C. Wurll, "Human-robot interactions in autonomous hospital transports," Robot. Auton. Syst., vol. 179, p. 104755, 2024.

- Y. Tang, I. Mamaev, and B. Hein, "Enhancing logistics automation: Integrating capacitive proximity and tactile sensors for trolley pose and center of mass estimation," in Proc. IEEE 20th Int. Conf. Autom. Sci. Eng. (CASE), 2024.

2023

- Y. Tang, I. Mamaev, J. Qin, C. Wurll, and B. Hein, "Reachability-aware collision avoidance for tractor-trailer system with non-linear MPC and control barrier function," in Proc. IEEE/RSJ Int. Conf. Intell. Robots Syst. (IROS), 2023.

- A. A. Attar, T. Fabarisov, A. Morozov, M. Artelt, and I. Mamaev, "Hybrid lightweight deep learning-based error detection model on edge computing devices," in Proc. IEEE 28th Int. Conf. Emerg. Technol. Fact. Autom. (ETFA), 2023, pp. 1–4.

- Y. Tang, W. Shen, I. Mamaev, and B. Hein, "Towards flexible manufacturing: Motion generation concept for coupled multi-robot systems," in Proc. IEEE 19th Int. Conf. Autom. Sci. Eng. (CASE), 2023.

- M. Käppler, I. Mamaev, H. Alagi, T. Stein, and B. Deml, "Optimizing human-robot handovers: The impact of adaptive transport methods," Front. Robot. AI, vol. 10, p. 1155143, 2023.

2022

- T. Fabarisov, A. Morozov, I. Mamaev, and P. Grimmeisen, "Fidget: Deep learning-based fault injection framework for safety analysis and intelligent generation of labeled training data," in Proc. IEEE 27th Int. Conf. Emerg. Technol. Fact. Autom. (ETFA), 2022, pp. 1–6.

- Z. Gyenes, I. Mamaev, D. Yang, E. G. Szádeczky-Kardoss, and B. Hein, "Motion planning for mobile robots using the human tracking velocity obstacles method," in Proc. Int. Conf. Inform. Control Autom. Robot. (ICINCO), 2022, pp. 484–491.

- X. Ye, W. Shen, I. Mamaev, T. Bertram, M. Bryg, M. Schwartz, S. Hohmann, T. Asfour, B. Hein, M. Kipfmueller, and J. Kotschenreuther, "Multi-level optimization approach for multi-robot manufacturing systems," in Proc. 54th Int. Symp. Robot. (ISR Europe), 2022, pp. 1–8.

2021

- T. Fabarisov, A. Morozov, I. Mamaev, and K. Janschek, "Deep learning-based error mitigation for assistive exoskeleton with computational-resource-limited platform and edge tensor processing unit," in Proc. ASME Int. Mech. Eng. Congr. Expo. (IMECE), 2021.

- Y. Tang, I. Mamaev, H. Alagi, B. Abel, and B. Hein, "Collision avoidance for mobile robots using proximity sensors," in Interactive Collaborative Robotics (ICR), 2021, pp. 205–221.

- I. Mamaev, D. Kretsch, H. Alagi, and B. Hein, "Grasp detection for robot to human handovers using capacitive sensors," in Proc. IEEE Int. Conf. Robot. Autom. (ICRA), 2021, pp. 12552–12558.

2020

- M. Eckstein, I. Mamaev, B. Ditzen, and U. Sailer, "Calming effects of touch in human, animal, and robotic interaction: Scientific state-of-the-art and technical advances," Front. Psychiatry, vol. 11, Art. 555058, 2020, doi: 10.3389/fpsyt.2020.555058.

- T. Fabarisov, I. Mamaev, A. Morozov, and K. Janschek, "Model-based fault injection experiments for the safety analysis of exoskeleton system," in Proc. 30th Eur. Saf. Reliab. Conf. (ESREL), 2020.

Looking for an SME research partner? Contact us.