Physical AI Tooling

pxRobotLearning

pxRobotLearning is an end-to-end platform for developing, training, validating, and deploying Physical AI systems. It combines simulation-first development, data collection, imitation learning, reinforcement learning, and optimized inference into a single, coherent pipeline that scales from research to real-world deployment.

- Simulation-Centered Learning Pipeline

- Flexible Learning Algorithms

- Sim-to-Real Adaptation & Fine-Tuning

- Production-Ready Deployment & Multimodal Learning

What We Deliver

Isaac Sim–Based Simulation Pipeline

- High-fidelity simulation foundation

The learning pipeline is built on Isaac Sim, providing physically realistic environments for robotic interaction and data generation

- Scalable training environments

Supports large-scale parallel simulation for efficient policy training and evaluation

- Flexible scene and sensor configuration

Enables rapid setup of robot models, sensors, and task scenarios

Reinforcement Learning & Imitation Learning Algorithms

- Multiple RL algorithm options

Provides a selection of state-of-the-art reinforcement learning algorithms suitable for different robotic tasks

- Imitation learning from demonstrations

Supports learning from expert data collected via teleoperation or scripted policies

- Unified training framework

Allows RL and IL methods to be combined or switched seamlessly within the same pipeline

Multi-Physics Sim-to-Sim Transfer

- Cross-engine validation

Supports sim-to-sim transfer across PhysX, Newton, and MuJoCo to improve generalization

- Physics-aware robustness testing

Exposes policies to varying dynamics, contacts, and constraints

- Reduced simulator bias

Mitigates overfitting to a single physics engine

Sim-to-Real Fine-Tuning

- Progressive domain adaptation

Fine-tunes policies trained in simulation using real-world data

- Bridging the reality gap

Addresses discrepancies in dynamics, sensing, and actuation

- Safe and efficient deployment

Enables gradual transfer from simulation to physical robots

High-Performance Deployment with ONNX & TensorRT

- Standardized model export

Converts trained models to ONNX for framework-independent deployment

- TensorRT-optimized inference

Achieves low-latency, high-throughput execution on edge and embedded GPUs

- Production-ready runtime stack

Designed for stable and scalable robotic deployment

Vision–Language Models & Vision–Language–Action Learning

- Multimodal perception and reasoning

Integrates vision and language understanding for complex tasks

- Vision–Language–Action (VLA) policies

Enables robots to map high-level instructions to low-level actions

- Fine-tuning for robotic domains

Adapts pretrained multimodal models to real robotic environments and tasks

The pxRobotLearning platform delivers an end-to-end workflow that connects simulation, learning, adaptation, and deployment into a unified system. By combining high-fidelity simulation, flexible learning algorithms, robust sim-to-real transfer, and production-ready deployment with advanced multimodal learning capabilities, the platform enables scalable and reliable development of intelligent robotic behaviors across a wide range of real-world applications.

pxPerception

pxPerception is a high-precision perception platform for mobile and industrial robots operating in complex, real-world environments. It provides robust spatial understanding through tightly integrated sensing and perception pipelines, enabling reliable localization, mapping, and interaction even under challenging conditions such as low light, clutter, or dynamic scenes.

Built with simulation-first validation and real-to-virtual workflows in mind, pxPerception supports Digital Twin–based development and accelerates the transition from perception research to production-ready systems.

- High-Precision Perception Solution for Mobile & Industrial Robots and Humanoids

- Designed for low-light, warehouse, and industrial environments with robust sensing performance

- Enables high-accuracy spatial perception for navigation, localization, and interaction

- Provides an open development platform for robotic perception integration and customization

- Supports digital twin–based simulation and synthetic data generation

What We Deliver

LiDAR–IMU Integrated Perception & Localization

- Tightly coupled LiDAR–IMU integration

IMU is synchronized with LiDAR sensing to provide motion and pose information alongside point cloud acquisition

- Motion-aware point cloud generation

Inertial measurements compensate for motion distortion during scanning, improving data quality under dynamic movement

- Fusion with point cloud–based localization

Inertial constraints are combined with geometric matching to enhance localization robustness and accuracy

- Stable performance in challenging conditions

The integrated design improves reliability in fast motion, sparse geometry, and partially degenerate environments
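The motion compensation mentioned above can be illustrated with a minimal 2D sketch. It assumes the sensor moves with constant linear velocity and yaw rate during one sweep (values that would in practice come from IMU integration) and re-expresses every point in the sensor frame at the start of the sweep. The function name `deskew_scan` and the data layout are hypothetical and are not part of the pxPerception API.

```python
import math

def deskew_scan(points, v, omega):
    """Re-express each point in the sensor frame at sweep start (t = 0).

    points: list of (x, y, t), each measured in the sensor frame at time t.
    v:      (vx, vy) linear velocity in the start frame, assumed constant.
    omega:  yaw rate in rad/s, assumed constant.
    """
    corrected = []
    for x, y, t in points:
        theta = omega * t                  # sensor heading at time t
        c, s = math.cos(theta), math.sin(theta)
        # Rotate into the start-frame orientation, then add the sensor translation.
        corrected.append((c * x - s * y + v[0] * t,
                          s * x + c * y + v[1] * t))
    return corrected

# A static landmark 5 m ahead appears 0.1 m closer after 0.1 s at 1 m/s.
print(deskew_scan([(4.9, 0.0, 0.1)], v=(1.0, 0.0), omega=0.0))
```

A real pipeline would interpolate full 3D poses per point timestamp rather than assume constant velocity; the sketch only shows why per-point timestamps and inertial motion estimates are both needed.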

CUDA-Accelerated SLAM

- CUDA-based GPU acceleration

Core SLAM components are parallelized on the GPU for real-time processing of dense point clouds

- High-performance scan matching and state estimation

Accelerated registration and estimation enable low-latency operation in large-scale environments

- SDF-based map reconstruction

Supports TSDF and ESDF representations for dense 3D mapping and scene modeling

- Mapping for planning and collision checking

Generated maps can be directly used by downstream planning and control modules
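For readers unfamiliar with TSDF maps: each voxel stores a truncated signed distance to the nearest observed surface, fused over many measurements as a weighted running average. The sketch below shows that update for the voxels along a single sensor ray, in plain Python for clarity. The function name, the truncation distance, and the unit weight per observation are illustrative assumptions, not the pxPerception implementation, which runs on the GPU.

```python
def tsdf_update(tsdf, weights, voxel_depths, measured_depth, trunc=0.1):
    """Fuse one depth measurement into the voxels lying along a single ray.

    tsdf, weights:  per-voxel lists, updated in place.
    voxel_depths:   distance of each voxel centre from the sensor (m).
    measured_depth: range to the observed surface along the ray (m).
    trunc:          truncation distance (m), an illustrative value.
    """
    for i, d in enumerate(voxel_depths):
        sdf = measured_depth - d           # positive in front of the surface
        if sdf < -trunc:
            continue                       # far behind the surface: not observed
        value = min(1.0, sdf / trunc)      # truncate and normalise to [-1, 1]
        w = weights[i]
        tsdf[i] = (tsdf[i] * w + value) / (w + 1.0)   # weighted running mean
        weights[i] = w + 1.0
    return tsdf, weights

depths = [0.8, 0.95, 1.0, 1.05, 1.3]
tsdf, weights = tsdf_update([0.0] * 5, [0.0] * 5, depths, measured_depth=1.0)
print(tsdf, weights)
```

The surface lies where the fused value crosses zero. An ESDF, by contrast, stores the Euclidean distance to the nearest obstacle for every voxel, which is the quantity planners typically query for collision checking.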

Point Cloud–Based Detection & Segmentation

- Native 3D perception on point clouds

Perception operates directly on 3D data, independent of lighting and visual appearance

- From detection to segmentation

Provides object detection, semantic segmentation, and instance-level understanding

- Geometric and learning-based methods

Combines classical geometry with data-driven models for robust perception

- Rich semantic understanding of environments

Enables robots to identify obstacles, structures, and functional regions in complex scenes

pxTeleopForceXR

- AR/VR teleoperation with force feedback + custom visual and force guidance

- FACTR leader arm or robot arm as leader, Meta Quest 3 / Apple Vision Pro as XR device

- Dataset collection in simulation: Isaac Sim for first-phase prototype testing

- Isaac Lab integration for training with human corrections/interventions

- ZeroMQ and DDS middleware as communication alternatives

Getting XR teleoperation with force feedback to feel “right” is rarely a single-step process — especially when tasks, tools, and prototype hardware change frequently. At the same time, visual imitation learning and VLA approaches demand high-quality datasets across many tasks, while reinforcement learning often benefits from Human-in-the-Loop corrections that inject human intention as a stabilizing signal during training.

pxTeleopForceXR simplifies this loop with an integrated AR/VR + force-feedback teleoperation stack featuring custom visual and force guidance. On the operator side, it supports a leader arm setup (using FACTR as a template) and can also pair with robot arms as haptic devices when higher-fidelity force feedback is needed.

For first-phase testing and rapid iteration, pxTeleopForceXR leverages NVIDIA Omniverse Isaac Sim to validate interaction and guidance while collecting structured demonstrations for dataset generation. For Human-in-the-Loop reinforcement learning (HIL-RL), it targets Isaac Lab, enabling training workflows where humans provide interventions and corrections efficiently.

Integration is kept modular and deployment-friendly via ZeroMQ (high-throughput streaming) and DDS middleware (real-time messaging), with configurable runtime behavior for scaling, filtering, safety limits, guidance strategies, and haptic profiles.
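To make the configurable scaling, filtering, and safety limits more concrete, here is a minimal single-axis sketch of how an operator command might be conditioned before it reaches the robot. The class `CommandConditioner`, its parameter names, and the default values are hypothetical illustrations and do not reflect the actual configuration schema.

```python
from dataclasses import dataclass

@dataclass
class CommandConditioner:
    """Hypothetical per-axis conditioning of operator commands."""
    scale: float = 0.5     # leader-to-robot motion scaling
    alpha: float = 0.2     # low-pass smoothing factor in (0, 1]
    limit: float = 0.25    # absolute safety limit on the output
    _state: float = 0.0    # filter memory

    def step(self, raw: float) -> float:
        scaled = self.scale * raw
        # First-order low-pass filter (exponential moving average).
        self._state += self.alpha * (scaled - self._state)
        # Clamp last so the limit always holds, whatever the filter state.
        return max(-self.limit, min(self.limit, self._state))

axis = CommandConditioner()
for raw in (0.0, 1.0, 1.0, 1.0):
    print(axis.step(raw))
```

Applying the clamp after the filter is a deliberate choice in this sketch: the output bound then holds regardless of input spikes or filter tuning.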

Simulation Tooling

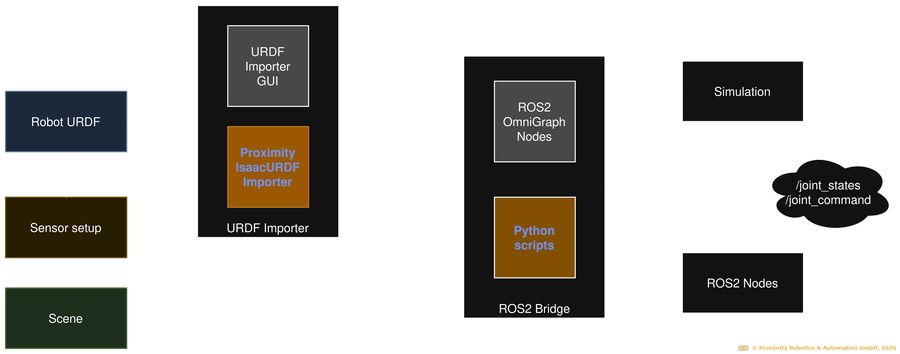

pxIsaacSimURDFImporter

Getting a robot into simulation is rarely a single-step process. Especially during early prototyping, when components, sensors, or kinematic structures change frequently, maintaining a consistent simulation model quickly becomes time-consuming and error-prone.

The pxIsaacSimURDFImporter simplifies this process by automating the import of robots into NVIDIA Omniverse Isaac Sim directly from their URDF/xacro descriptions. Instead of manual asset adjustments for every iteration, engineers can initialize a complete simulation scene with a single command—making the setup process both faster and more reproducible.

- Imports robots into Isaac Sim directly from URDF/xacro files

- Launches complete simulation scenes with a single command

- Integrates ROS-ready sensors, joint states, and ros2_control

- Provides extensible and customizable open-source architecture

Seamless URDF Integration

Automatically import robots from their URDF/xacro descriptions into Isaac Sim—preserving and extending structure, parameters, and sensor configurations with high fidelity.

ROS 2 Native Compatibility

Integrate smoothly with existing ROS-based simulation and control workflows. The import supports joint states, sensor topics, and ROS 2 controllers out of the box.

Flexible & Reproducible Prototyping

Iterate quickly without repetitive manual setup. Configure import parameters as needed and generate consistent, simulation-ready environments with every build.

The pxIsaacSimURDFImporter is open source and actively maintained, with ongoing development to support newer Isaac Sim and ROS releases.

Explore the source on GitHub: PX_IsaacSim_URDF_Importer, or test it using our Slamdog 3.0 mini robot with PX_IsaacSim_URDF_Importer_SLAMDOG3.

Hardware

Slamdog 3.0 mini

Slamdog 3.0 mini is a ROS 2-native mobile robot designed as a modular reference platform for research, teaching, and applied robotics development. Its modular design allows easy adaptation to different applications, sensor configurations, and hardware setups while maintaining reproducible workflows between simulation and real hardware.

Rather than targeting a fixed application, Slamdog 3.0 mini provides a flexible foundation for validating algorithms, system architectures, and robotic concepts under realistic conditions.

Key Features

- Modular architecture

- ROS 2-native open-source software stack

- Omnidirectional drive + differential drive emulator

- Simulation-ready design: fully supported and open-source NVIDIA Isaac Sim model

Technical Specs

- Dimensions (L × W × H): 400 × 400 × 334 mm (incl. handles)

- Weight: approx. 20 kg (incl. battery)

- Payload: 20 kg

- Max speed: 1.7 m/s

Hardware Options

- NVIDIA Jetson AGX as onboard controller

Run perception, robotics, and learning-based applications directly on the robot.

- 2D / 3D LiDARs

Slamtec, Seyond, RoboSense

- RGB-D Cameras

Intel RealSense D455 / D455f

Stereolabs ZED series

- Event-Based Cameras

IDS uEye XCP-E (IMX636)

Teaching Use Case

- Open-source, ROS 2-native platform - ready to use!

- Fully integrated Digital Twin

Available in NVIDIA Omniverse Isaac Sim, enabling students to start in simulation and transition seamlessly to real hardware (GitHub).

- Available as a self-assembly kit

Supports hands-on learning in system integration, mechanics, electronics, and software.

Energy-aware Robotics

- Integrated energy measurement (optional)

pxEMS provides real-time monitoring of power consumption during experiments and tasks.

- Energy-aware education

Enables teaching of energy profiling, comparison of algorithms, and energy-efficient system design.

- Research-ready

Supports reproducible energy measurements across different hardware and software configurations.

Still not convinced?

Experience the Slamdog 3.0 mini in simulation through the NVIDIA Omniverse Isaac Sim integration on GitHub: PX_IsaacSim_URDF_Importer_SLAMDOG3

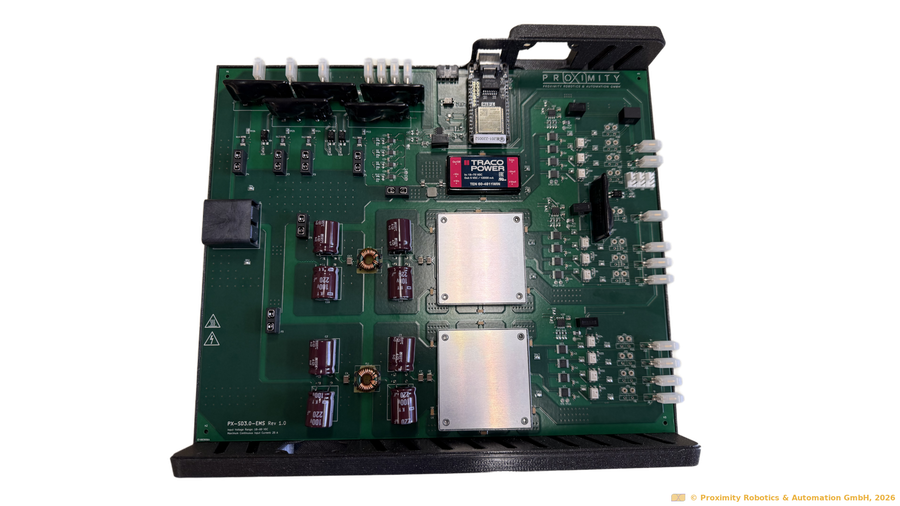

pxEMS

pxEMS is a hardware-based Energy Management System designed to make energy consumption a measurable, controllable, and optimizable parameter in robotic and Physical AI systems.

Modern robots integrate powerful compute, rich sensing, and complex actuation, often without clear visibility into where energy is actually consumed. pxEMS closes this gap with high-resolution, multi-channel energy monitoring and control. The system continuously tracks energy flows across up to 16 independent channels, providing detailed insight into how individual subsystems consume power during real tasks. This enables energy-aware development from simulation and Proof of Concept through to deployment.

Fully integrated into ROS 2 environments, pxEMS allows energy efficiency to be considered alongside performance, safety, and reliability—supporting sustainable, data-driven robotics.

Key Features

- Up to 16 channels for monitoring power consumption of compute, sensors, actuators, and subsystems

- Multiple voltage levels: battery voltage, 24 V, 12 V, and 5 V

- Seamless integration into ROS 2 environments via micro-ROS
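As an illustration of what per-channel monitoring enables, the sketch below integrates logged voltage and current samples into energy per subsystem using the trapezoidal rule. The sample layout `(time_s, voltage_V, current_A)`, the channel names, and the function names are assumptions made for this example; they are not the pxEMS message format or API.

```python
def energy_wh(samples):
    """Integrate (time_s, voltage_V, current_A) samples to watt-hours
    using the trapezoidal rule."""
    joules = 0.0
    for (t0, v0, i0), (t1, v1, i1) in zip(samples, samples[1:]):
        joules += 0.5 * (v0 * i0 + v1 * i1) * (t1 - t0)
    return joules / 3600.0

def energy_by_channel(log):
    """log: dict mapping a channel name to its list of samples."""
    return {name: energy_wh(samples) for name, samples in log.items()}

# Hypothetical one-hour log with two channels.
log = {
    "compute": [(0, 12.0, 2.0), (1800, 12.0, 2.0), (3600, 12.0, 2.0)],
    "lidar":   [(0, 5.0, 0.0), (3600, 5.0, 2.0)],
}
print(energy_by_channel(log))
```

Comparing such per-channel totals across two controller or policy variants running the same task is one simple way to approach the energy profiling use cases listed below.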

Typical Use Cases

- Energy profiling of mobile robots, manipulators, and humanoids

- Energy-aware controller and policy optimization

- Validation of energy models in Digital Twins

- Sustainability-driven automation projects

- Research and industrial R&D environments

Application Areas

Sustainability is becoming a defining requirement for modern robotic systems. As robots integrate increasingly powerful compute, sensing, and actuation, energy efficiency must be treated as a core design parameter rather than an afterthought.

- We support academic research in energy-aware robotics through a free pxEMS loan program. Contact us for details.

- Robot and automation companies can use pxEMS to validate sustainability claims and increase operational time per battery charge—get in touch to learn more.

pxSafeRemote

pxSafeRemote is a safety-certified remote control designed for robotic systems — when safety requirements exceed what consumer-grade controllers can provide.

Fully integrated into ROS 2, pxSafeRemote enables reliable long-range operation while meeting industrial safety standards for Emergency Stop and Control signals. It provides a secure bridge between experimental systems and safety-compliant operation, ensuring that early-stage automation and robotic systems can be evaluated without compromising personnel or compliance.

- Emergency stop and safe control functions up to Category 3, PL e / SIL 3

- Reliable wireless operation beyond 100 m, suitable for industrial and outdoor environments

- ROS 2 native integration

- Integrated 2.1″ graphic display and vibration feedback for clear system status and alerts

- Suitable for scenarios where safety requirements evolve faster than the system itself

Bridges PoC and Safety Compliance

Enables continued testing and commissioning when safety requirements emerge — without stopping development.

No “Bluetooth Joystick” Compromises

Purpose-built for robotics projects where consumer controllers are no longer acceptable.

Plug-and-Play for Omniverse and ROS 2–based Robots

Integrates directly into existing Omniverse Simulation, ROS 2 control, and safety architectures.

Interested in testing or integrating this remote controller into your project? Contact us for further information.

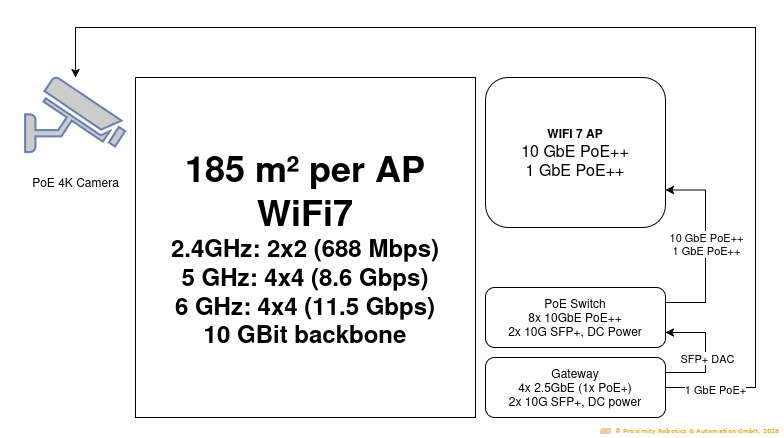

pxMobileNet

High-Performance Network Infrastructure for Robotics Demos & Exhibitions

pxMobileNet is a portable, pre-configured network infrastructure designed for robotics demonstrations, exhibitions, and short-term deployments where radio environments are crowded, unpredictable, and hostile.

Trade fairs and demo environments often suffer from interference, overloaded WiFi, and unstable connectivity—putting robotics demos at risk. pxMobileNet provides a controlled, high-performance communication backbone that ensures reliable operation when it matters most.

Available as a rental service or project-specific deployment.

What pxMobileNet Includes

- WiFi 7 access points with high client capacity and fast roaming

- 10 GbE backbone with PoE++ for cameras, APs, and edge devices

- Support for high-bandwidth sensors (4K PoE cameras, LiDARs)

- Preconfigured QoS profiles for robotics workloads

- Gateway and switching infrastructure optimized for ROS 2 / DDS traffic

Key Advantages

- Robust operation in hostile RF environments

- No dependency on venue WiFi

- Fast setup and teardown

- Known, tested performance characteristics

- Reduced stress for demo teams

- Uninterrupted, Sustainable Power Supply

Optional Add-Ons

- On-site setup and support

- RF & Network monitoring during the event

- Integration with robot and sensor systems

- Private addressing and Secure VPN Remote Access

- 5G Connectivity

- Satellite Internet Access

- AI-Enabled Surveillance & Site Monitoring

- M.2 2230 WiFi 7 modules for edge devices (Jetson AGX)

Datasets & Open Research Assets

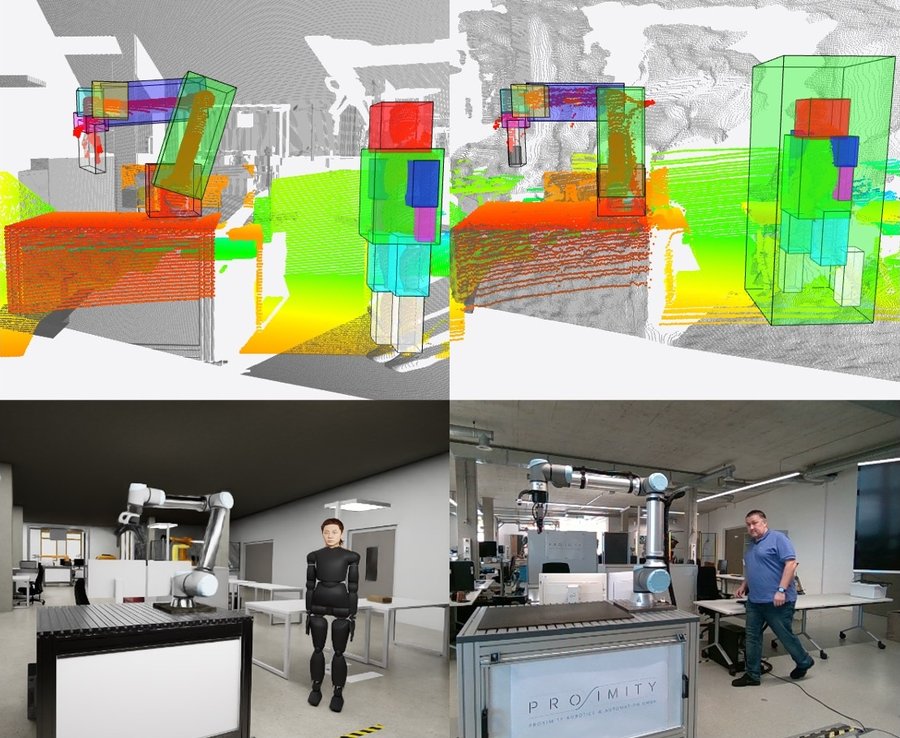

OmniABiD Dataset

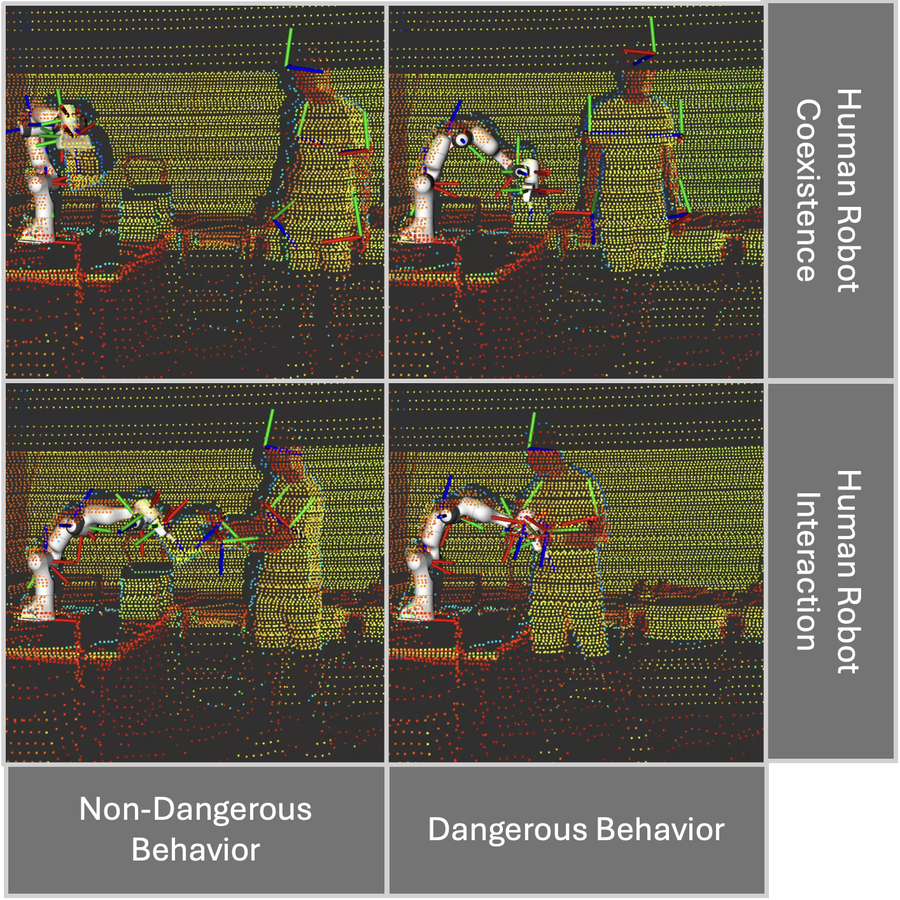

OmniABiD is an open dataset designed to evaluate safety and risk monitoring methods in industrial Human–Robot Collaboration (HRC). It combines high-fidelity simulated scenarios (NVIDIA Omniverse Isaac Sim) with real-world recordings, enabling systematic comparison of algorithms across simulation and reality in consistent interaction settings.

- Industrial HRC scenarios in simulation and real environments for sim-to-real evaluation

- Focus on hazard analysis and risk monitoring in collaboration/coexistence tasks

- Suitable for benchmarking risk metrics and safety-related perception/monitoring pipelines

Benchmarking sim-to-real transfer of safety monitoring methods

Evaluating risk monitoring in realistic industrial interaction patterns (handover/collaboration/coexistence)

Training/validation datasets for safety-aware perception and monitoring pipelines

Check out our publications:

- F. Plahl, G. Katranis, K. Alba, F. Wolny, S. Vock, A. Morozov and I. Mamaev, "OmniABiD: Evaluating Sim2Real Transferability in Safety and Risk Monitoring of Human-Robot Collaboration using NVIDIA Omniverse," in Proc. 17th Eur. Rob. Forum (ERF), 2026, to appear.

Learn more about our work on safety in robotics with the CogniSafe3D project.

Explore OmniABiD on GitHub: OmniABiD@github

LiHRA Dataset

LiHRA is an open dataset for automated risk monitoring in Human–Robot Interaction (HRI), centered on high-resolution 3D LiDAR. It combines 3D point clouds, human keypoints, and robot joint states to support real-time risk detection and quantification methods. The dataset contains 4,400+ labeled frames and includes both intentional contact and unintentional collision events to enable evaluation under safety-critical conditions.

- LiDAR-centric dataset for risk monitoring, with aligned human + robot state information

- Includes high-resolution point clouds, human keypoints, and robot joint states

- 4,400+ labeled frames with both collision and contact scenarios

- Supports development of AI-driven safety monitoring and proactive risk assessment methods

Check out our publications:

- F. Plahl, G. Katranis, I. Mamaev, and A. Morozov, "LiHRA: A LiDAR-based HRI dataset for automated risk monitoring methods," in Proc. IEEE/RSJ Int. Conf. Intell. Robots Syst. (IROS), 2025.

- G. Katranis, F. Plahl, J. Grimstadt, I. Mamaev, S. Vock, and A. Morozov, "Dynamic risk assessment for human-robot collaboration using a heuristics-based approach," in Proc. 35th Eur. Saf. Reliab. Conf. (ESREL), 2025, pp. 1830–1837.

Learn more about our work on safety in robotics with the CogniSafe3D project.

Explore LiHRA on GitHub: LiHRA@github